Most startups begin with a lean infrastructure, Rover is no exception to this. Over time the organization grows, systems become more complex, and the expectations of the business evolve. At times change is implemented gradually and incrementally, and at times it necessitates large actions. Rover reached a point recently where it became evident that using tooling and infrastructure within a single AWS account was limiting. To allow the engineering organization to be effective while maintaining and improving best practices we opted to segregate our multiple environments at the Account level as opposed to VPCs and granular intra-account IAM permissions and policies.

The resultant effort was a large, complicated piece of work that had many components. Arguably the most challenging aspect was being able to easily move the system of record for our OLTP relational databases across accounts and regions with minimal downtime while managing the least possible risk to data integrity. The path we took follows below.

Cross Account Replication

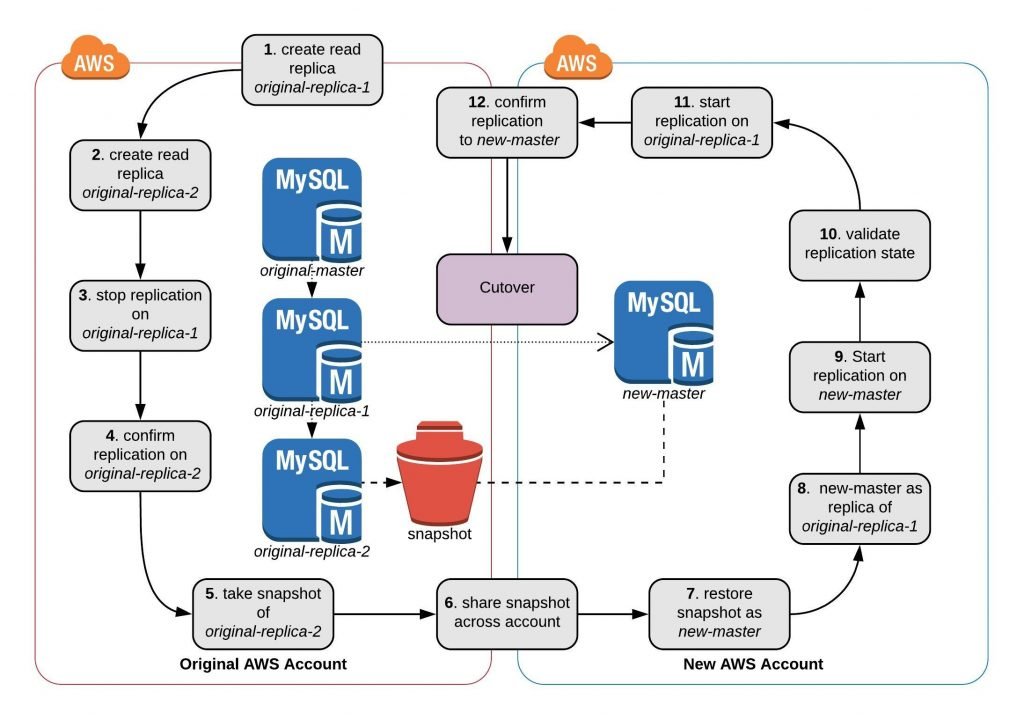

Migrations between AWS accounts are generally unwieldy on the whole and specifically there is no supported cross-account replication functionality provided by RDS. Out of several options, we chose to use snapshots to create a replication chain crossing both AWS accounts and regions.

Using Snapshots

This extrapolates from the AWS documentation for external replication and importing via replication that by using the rds replication functions one can isolate control of meaningful changes to the binlog. We manipulate a read replica into a state where we can create a binlog consistent snapshot, restore that to the new account, and configure replication from that newly restored master pointed back to our original account.

RDS Cross Account Replication Steps

These steps are elaborated upon below:

Step 1: Create Read Replica (original-replica-1) from Master (original-master)

- No other uses as you’re going to stop replication on this. Its sole purpose is to serve binlog across accounts.

Step 2: Create Read Replica (original-replica-2) from Read Replica

- This is the one you’ll end up taking a snapshot from.

Step 3: Stop Replication on Read Replica (original-replica-1)

- Execute “call mysql.rds_stop_replication” via your favorite sql client.

- Execute “show master status” and take note of your current binlog position.

RDS will now show this instance as unavailable (hence why we need the second replica for a snapshot).

Step 4: Validate Read Replica (original-replica-2) has reached binlog consistency with Read Replica (original-replica-1)

- Execute “show slave status” on original-replica-2. Continue once the binlog file / position from original-replica-1 has been passed. This likely will have occurred already.

While binlog will continue to accumulate from rds internals, the meaningful binlog on your databases will have been consumed.

Step 5: Snapshot Read Replica (original-replica-2)

- Using the RDS console, take a manual snapshot of original-replica-2.

Step 6: Share snapshot to new account (and copy across regions if necessary)

Step 7: Restore snapshot into new account (new-master)

Step 8: Set Master (new-master) to replicate from Read Replica (original-replica-1)

- Execute “call mysql.rds_set_external_master” on new-master with your appropriate values. Your master will be original-replica-1 and binlog position as noted in Step 3.

Step 9: Start Replication on Master (new-master)

- Execute “call mysql.rds_start_replication” on new-master

Step 10: Validate Replication State

- Execute “show slave status” on new-master. Ensure replication fires up and no errors have arisen.

At this point any issues can be addressed without re-snapshotting so its best to identify them now.

Step 11: Start Replication (original-replica-1)

- Execute “call mysql.rds_start_replication” on original-replica-1

Step 12: Confirm Replication (new-master)

- Once your chain is replicating, original-replica-2 may be deleted

At this point, you should see binlog pass through original-replica-1 across to new-master.

Note that new-master will accept writes, so take care in how you test both the database and the application stack.

Optional Fallback

Repeat steps 1 through 12 going back to the old account. This may be useful if issues arise during cutover that require moving back to your old account. Any interim transactions executed in the new account during application failover would be replicated back into your old account, with just the need to change endpoint DNS.

Cutover

Step 13: Disable Writes to Master (original-master)

- Apply a security group limiting access to only controlled clients, remove all other security groups. This allows administration while blocking everyone else. Or other suitable implementation to control write access.

- Execute “show master status” on original-master to collect your binlog file/position.

Step 14: Validate binlog position between Masters (original-master and new-master)

- Execute “show slave status” on new-master and compare to binlog position acquired in Step 13.

Step 15: Stop Replication on Master (new-master)

- Execute “call mysql.rds_stop_replication” on new-master.

Step 16: Reset Replication on Master (new-master)

- Execute “call mysql.rds_reset_replication” on new-master.

- Once this is performed, all references to the replication configuration will be nulled out.

Step 17: Cutover Complete.

Our Outcome

We achieved a fully deployed RDS environment across accounts with sub-second replica lag. This was a major factor in allowing us to move our production environment over to a new AWS account and region with a cumulative 17 minute maintenance outage.

The Other Options

Snapshot and Restore

This is implemented by turning off the application, taking a snapshot, sharing the snapshot with the new account, then bringing up a new master database in said account.

Pros: Clicking buttons is easy

Cons: Extended downtime, further enhanced by our usage of multiple read replicas

Taking a snapshot is inconsistent in timing and could take a few minutes to near an hour. Restoring a database has similar challenges, and we also have replica pools to build. All in all, this would have incurred extensive downtime and we would have been unable to support our users during this process.

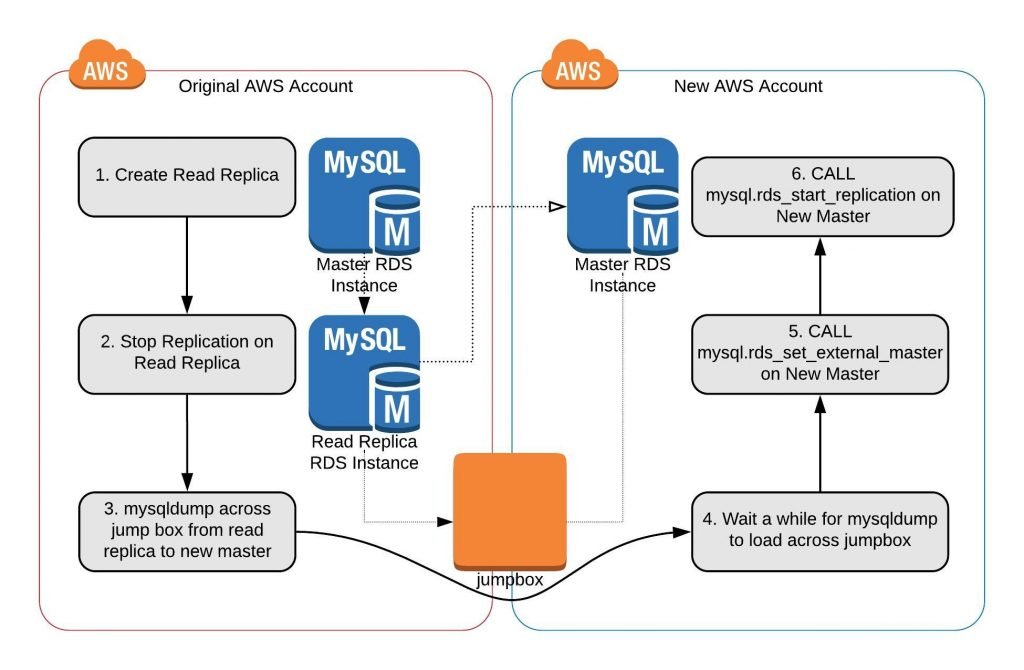

Cross Account Replication – mysqldump

This is probably the most prevalent source of existing knowledge addressing this challenge. The process basically involves using the AWS documentation around external replication and importing via replication, with the caveat that it is RDS to RDS as opposed to RDS to External or vice versa. Outside of this documentation, there are many examples around the Internet.

RDS Replication via MySQLdump

Pros: While this presents in all appearances as a slightly brittle process, it seems fundamentally sound if executed with care.

Cons: MySQL dump / restore can be a long process; ensuring not to cross-contaminate rds instance artifacts.

In rds to external replication, it doesn’t matter if some RDS specific configurations, settings, or tables get sent across as it would in theory be ignored. We set this up with a clean RDS instance in our new account and piping the mysql dump through a jump box that could talk to both instances. Note that we accumulated 16 hours of binlog that had to be processed once we turned replication back on (you should do this from a read replica that has replication paused). Ultimately this solution, while viable, was proven less desirable due to spiking replica lag amongst other concerns.

Cross Account Replication – AWS Database Migration Service

DMS provides the capability to both replicate a database as well as present ongoing changes via CDC. While this is a useful ETL tool and can provide some capabilities for disaster recovery, it was evaluated and not considered adequate as it is primarily focused on shipping schema and data as opposed to a complete replication of a database system and all the ancillary artifacts.

Documentation and Resources

https://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/MySQL.Procedural.Exporting.NonRDSRepl.html

https://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/MySQL.Procedural.Importing.External.Repl.html

https://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/Appendix.MySQL.SQLRef.html

https://dev.mysql.com/doc/refman/5.6/en/replication-configuration.html